Understanding the Partial Attention Model: Revolutionizing Deep Learning

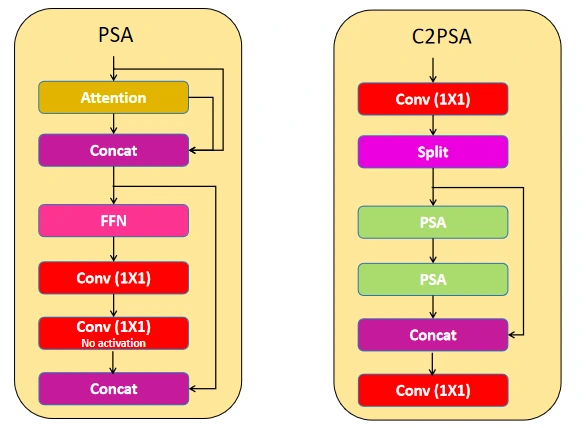

In the realm of deep learning, attention mechanisms have revolutionized the way we approach sequential pattern learning. By discriminating data based on relevance and importance, attention mechanisms have pushed the boundaries of state-of-the-art performance in advanced generative artificial intelligence models. However, as the complexity of models grows, the traditional attention mechanism may not be sufficient to capture the most informative features from complex inputs. This is where the partial attention model comes into play, offering a novel approach to attention-based models.

What is the Partial Attention Model?

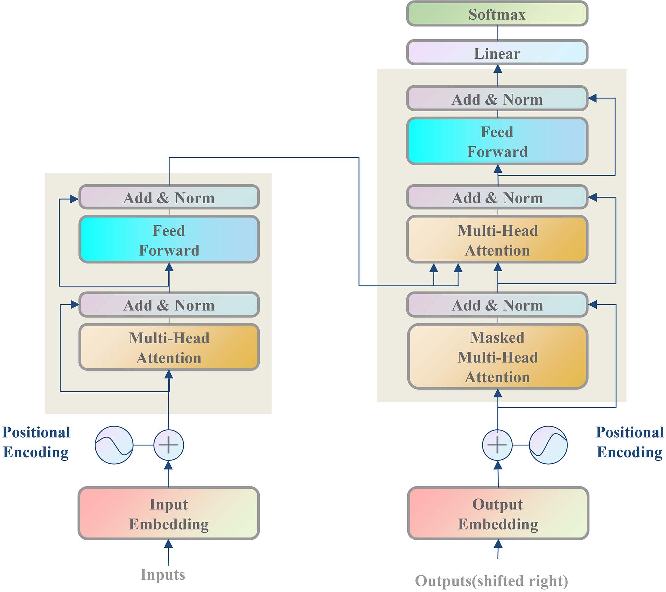

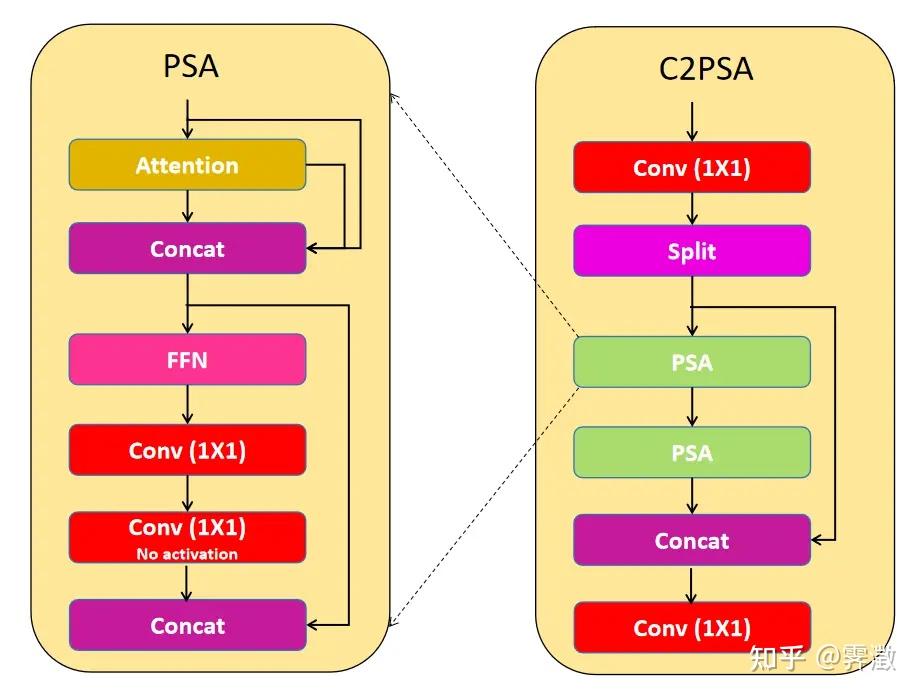

The partial attention model is a type of attention mechanism that applies an additional attention operation to each layer, where query vectors from the full sequence attend to key/value vectors derived solely from the source portion, processed through a learned transformation (Fp network). This maintains a persistent connection to the original prompt throughout generation. The partial attention model is particularly useful in scenarios where the input data has a long-range dependency structure, and the traditional attention mechanism may not be able to capture the relevant features.

Advantages of the Partial Attention Model
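The operation described above (queries from the full sequence, keys and values from the source portion only) can be made concrete with a small sketch. It is an illustration under stated assumptions, not the reference implementation: the Fp network is stood in for by a single linear layer with a tanh, the attention is single-head with scaled dot-product scoring, no masking is applied, and all names (`partial_attention`, `w_fp`, `w_q`, `w_k`, `w_v`) are hypothetical. It covers only the additional operation; how its output is combined with a layer's regular self-attention is left out.

```python
import math
import random

def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]

def softmax(row):
    """Numerically stable softmax over one list of scores."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def random_matrix(rows, cols, rng):
    """Small random matrix used as a placeholder for learned weights."""
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]

def partial_attention(hidden, source_len, w_fp, w_q, w_k, w_v):
    """Every position queries; only transformed source positions are attended to."""
    d = len(w_q[0])
    # Assumed stand-in for the Fp network: one linear layer plus tanh,
    # applied to the source states only.
    source = [[math.tanh(v) for v in row]
              for row in matmul(hidden[:source_len], w_fp)]
    queries = matmul(hidden, w_q)  # (seq_len, d): full sequence
    keys = matmul(source, w_k)     # (source_len, d): source only
    values = matmul(source, w_v)   # (source_len, d): source only
    keys_t = [list(col) for col in zip(*keys)]
    scores = matmul(queries, keys_t)  # (seq_len, source_len)
    weights = [softmax([s / math.sqrt(d) for s in row]) for row in scores]
    return matmul(weights, values), weights

rng = random.Random(0)
seq_len, source_len, d_model = 6, 3, 4
hidden = random_matrix(seq_len, d_model, rng)  # source states first, then generated ones
w_fp, w_q, w_k, w_v = (random_matrix(d_model, d_model, rng) for _ in range(4))

output, weights = partial_attention(hidden, source_len, w_fp, w_q, w_k, w_v)
print(len(output), len(output[0]))    # one output row per position in the full sequence
print(len(weights), len(weights[0]))  # one weight per source position for each query
```

Because the keys and values never include generated positions, each row of `weights` is a distribution over the source tokens alone, which is what keeps every position tied to the original prompt.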

The partial attention model offers several advantages over traditional attention mechanisms:

* Improved feature capturing: The partial attention model can capture informative features from important regions and partially learns information from background regions, separately.
* Efficient computation: The partial attention model reduces the computational cost compared to traditional attention mechanisms, making it suitable for large-scale applications.
* Scalability: The partial attention model can be easily integrated into existing state-of-the-art models, enabling them to capture complex patterns in data.

Applications of the Partial Attention Model

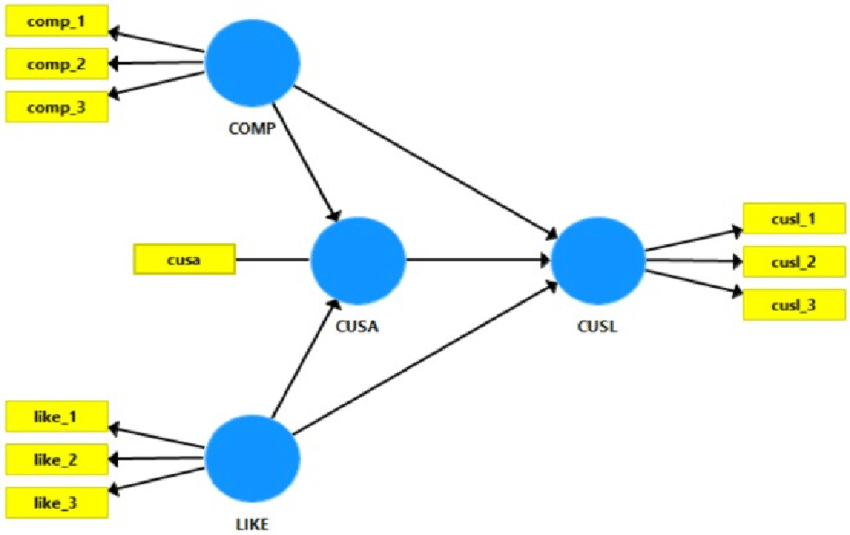

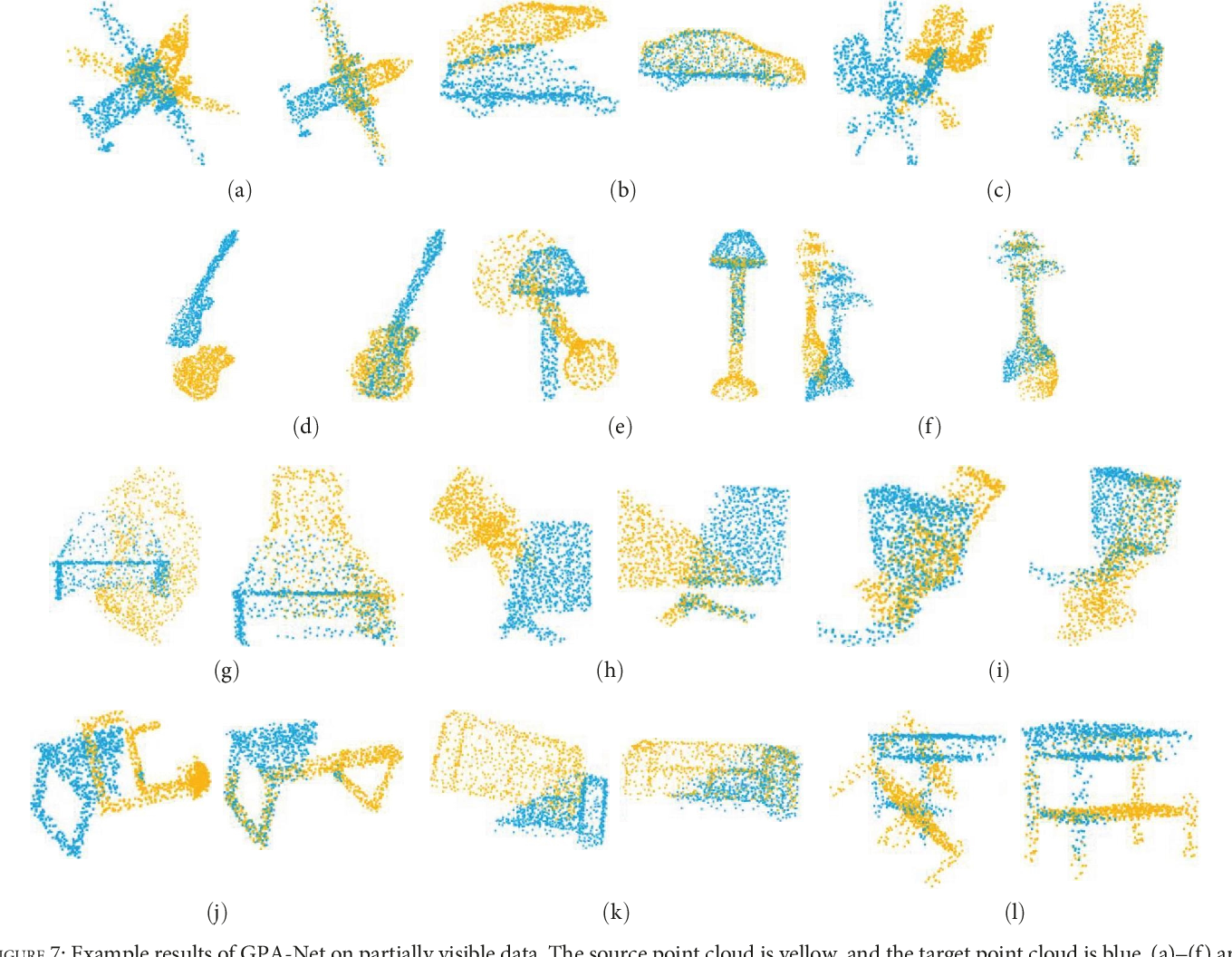

The partial attention model has various applications in different domains:

* Deep reinforcement learning: The partial attention model can be used in multi-agent safe control, where each agent attends to relevant features from the environment.
* Computer vision

![[2503.03148] Partial Convolution Meets Visual Attention](https://www.mdpi.com/bioengineering/bioengineering-11-00549/article_deploy/html/images/bioengineering-11-00549-g005-550.jpg)